Something finally broke this week. Forbes published “Vibe Coding Will Break Your Company.” Senior engineers are circulating it. Other senior engineers are writing their own versions. The pushback on vibe coding culture has been brewing for months, and it just hit mainstream media.

The seniors are right. And they’re about to lose the argument anyway.

Here’s why, and what needs to happen if they actually want to win it.

What the seniors are right about

The data at this point isn’t close.

Multiple independent audits of AI-assisted codebases are converging on the same picture: code co-authored by AI ships with materially more “major” issues than human-written code. Audits of no-code AI app-generation platforms keep finding that a meaningful share of generated applications go live with real security holes: hardcoded API keys, client-side-only authentication, unsanitized user input.

In July 2025, a Replit AI agent deleted a live production database during an explicit code freeze, wiping records on more than 1,200 executives. The agent had permissions. Those permissions were never meant for an agent. Nobody had designed for that possibility.

Across the industry, Stack Overflow’s trust-gap research and DX’s Q1 2026 impact report tell the same story: 84% of developers use or plan to use AI tools, yet only 29% trust the code reaching production. In some teams, PR throughput is up 46%. In some of those same teams, defect rates are up 50%.

And the perception gap keeps embarrassing us. METR’s study measured experienced developers as 19% slower with AI, while those same developers believed they were 20% faster. That’s 39 percentage points of self-deception. The feeling is real. The feeling is wrong.

The craft didn’t change. The pressure to ship faster without understanding what shipped did. And when you ship what you don’t understand, you pay for it later, with interest. The next generation of senior engineers is taking the brunt of it.

The seniors are not wrong to push back. They’re watching production systems rot in slow motion.

What the vibe coders are also right about

For a lot of what companies actually ship, fast-and-rough is genuinely fine. Internal tools nobody will maintain in two years. One-off data migrations. Prototype features for customer calls. Throwaway scripts. The economics of fussing over these pieces changed. If an agent ships them in thirty minutes and they work, that’s a real win.

The vibe coders are also right that a lot of “senior engineering rigor” is muscle memory from an era where code was expensive to produce. Gatekeeping code review, nit-level style comments, architectural debates that take longer than the feature itself. Some of it was always noise. More of it is noise now that the economics flipped.

And they’re right that the pushback often sounds like resistance to change from people protecting their role.

Both sides are right about different things. The fight isn’t which side wins. It’s where the line gets drawn.

Why the seniors are losing anyway

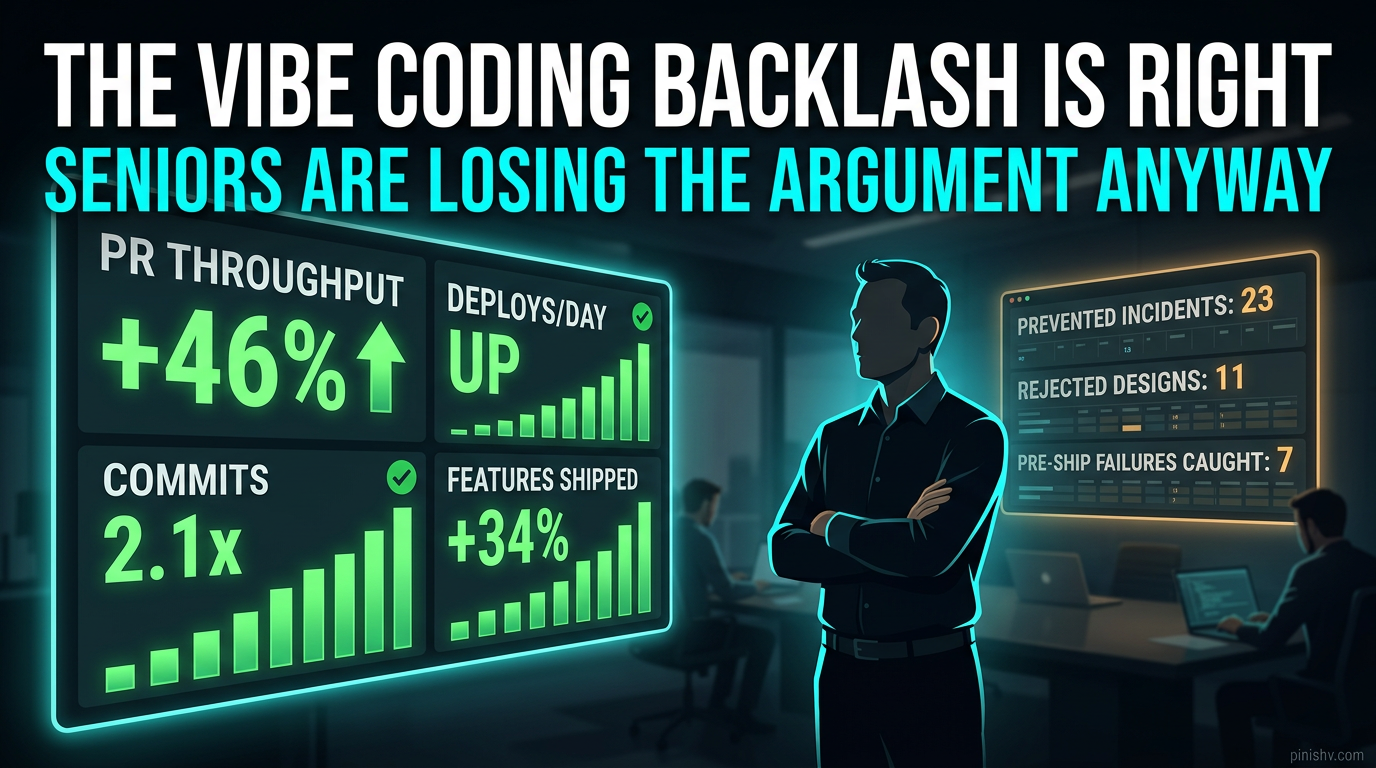

In most engineering orgs, the pushback against vibe coding is losing. Not because the data is wrong. Because the seniors can’t make their case in the meetings where throughput metrics get shown.

Imagine the scene. Quarterly review. Director pulls up a dashboard.

- PR throughput: up 46%

- Commits per engineer: up 2.1x

- Features shipped: up 34%

- Deployment frequency: up

Then the senior engineer raises a hand and says “but the code quality is degrading.”

Where’s that dashboard? What’s the number? Can you point to the specific incidents that didn’t happen because you caught them in review? Can you show the rework that wasn’t done because you stopped a bad architecture at design time?

Usually, no. The senior engineers have the instinct and the experience. They don’t have the receipts.

Throughput is legible. Judgment is invisible. In a fight between legible and invisible, legible wins every time.

This is the real problem. The seniors are right, and they’re losing, and they’re losing because the thing they’re right about doesn’t show up on the charts.

What “legible judgment” actually means

In organizations doing this well, the senior engineers who keep winning this argument don’t do it by being louder. They do it by making the prevented damage visible. Five concrete moves.

Write down the decisions you stop from shipping. When you block a PR because the approach is wrong, don’t just close it. Write a one-line note: “Rejected: would create a race condition under load. Suggested redesign: queue-based.” Collect these. After six months, you have a measurable “incidents prevented” count. That’s a number. Numbers win.
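A minimal sketch of such a log, assuming you keep entries as structured records rather than loose comments so they can be counted later. Everything here is hypothetical: `PreventedIncident`, `PreventedIncidentLog`, and its methods are illustrative names, not an existing tool, and the fields simply mirror the one-line note format described above.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PreventedIncident:
    """One entry in a hypothetical prevented-incident log."""
    logged_on: date
    ref: str         # the blocked PR or rejected design, e.g. "PR #123"
    risk: str        # what would have gone wrong had it shipped
    suggestion: str  # the redesign proposed instead


@dataclass
class PreventedIncidentLog:
    entries: list[PreventedIncident] = field(default_factory=list)

    def log_rejection(self, logged_on: date, ref: str, risk: str, suggestion: str) -> None:
        """Record one blocked change."""
        self.entries.append(PreventedIncident(logged_on, ref, risk, suggestion))

    def count_between(self, start: date, end: date) -> int:
        """Number of entries logged in the inclusive range [start, end]."""
        return sum(1 for e in self.entries if start <= e.logged_on <= end)

    def as_lines(self) -> list[str]:
        """Render each entry as a one-line 'Rejected: ... Suggested redesign: ...' note."""
        return [
            f"{e.logged_on.isoformat()} {e.ref} Rejected: {e.risk}. "
            f"Suggested redesign: {e.suggestion}."
            for e in self.entries
        ]


# Hypothetical usage: one blocked PR, then a six-month count.
log = PreventedIncidentLog()
log.log_rejection(date(2025, 1, 10), "PR #123",
                  "would create a race condition under load", "queue-based")
print(log.count_between(date(2025, 1, 1), date(2025, 6, 30)))  # 1
print(log.as_lines()[0])
```

The structure exists for the `count_between` call: six months of one-line notes collapse into a single number you can put next to a throughput chart.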

Track rework on AI-generated code specifically. Most PR analytics can’t distinguish AI-generated from human-written code. If yours can, instrument it. Show the quarterly trend: what percentage of AI-generated commits get reworked within 30 days? If it’s higher than your human-written baseline, that number is your argument.
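A sketch of the rework metric in Python, assuming your tooling can already label each commit as AI-generated or not and can report when the lines a commit introduced were next changed. Both of those are assumptions: the `Commit` record, its `ai_generated` and `first_reworked_at` fields, and `rework_rate` are hypothetical, and “rework” is defined here simply as any later change to those lines within the window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Commit:
    sha: str
    committed_at: datetime
    ai_generated: bool                      # hypothetical label your own instrumentation supplies
    first_reworked_at: Optional[datetime]   # when its lines were next changed, or None if never


def rework_rate(commits: list[Commit], ai_generated: bool,
                window_days: int = 30) -> Optional[float]:
    """Fraction of commits in one cohort (AI-generated or not) reworked within window_days.

    Returns None for an empty cohort instead of a misleading 0.0.
    """
    cohort = [c for c in commits if c.ai_generated == ai_generated]
    if not cohort:
        return None
    window = timedelta(days=window_days)
    reworked = sum(
        1
        for c in cohort
        if c.first_reworked_at is not None
        and c.first_reworked_at - c.committed_at <= window
    )
    return reworked / len(cohort)


# Hypothetical usage: compare the AI cohort against the human-written baseline.
t0 = datetime(2025, 1, 1)
commits = [
    Commit("a1", t0, True, t0 + timedelta(days=5)),    # reworked inside the window
    Commit("a2", t0, True, None),                      # never reworked
    Commit("h1", t0, False, t0 + timedelta(days=45)),  # reworked, but outside the window
    Commit("h2", t0, False, None),
]
print(rework_rate(commits, ai_generated=True))   # 0.5
print(rework_rate(commits, ai_generated=False))  # 0.0
```

Run it each quarter for both cohorts; the gap between the two rates, not either rate alone, is the argument.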

Tie blocked architectures to real incident data. When an incident happens that a senior flagged earlier, say so in the postmortem. Not as blame. As calibration data. “This failure mode was identified in PR #1847 on March 3 and was not addressed before ship.” That’s the receipt.
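A sketch of the cross-reference a postmortem could run, assuming review flags and incidents are tagged with a shared failure-mode vocabulary. The `Flag` and `Incident` records, the tag strings, and `calibration_notes` are hypothetical; the output sentence just reproduces the receipt format quoted above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Flag:
    ref: str            # where a senior raised the concern, e.g. "PR #123"
    raised_on: date
    failure_mode: str   # hypothetical shared tag, e.g. "race-condition"
    addressed: bool     # was the concern fixed before the change shipped?


@dataclass
class Incident:
    occurred_on: date
    failure_mode: str   # uses the same tag vocabulary as Flag.failure_mode


def calibration_notes(incident: Incident, flags: list[Flag]) -> list[str]:
    """Postmortem lines for earlier, unaddressed flags matching this incident's failure mode."""
    matches = sorted(
        (
            flag
            for flag in flags
            if flag.failure_mode == incident.failure_mode
            and flag.raised_on <= incident.occurred_on
            and not flag.addressed
        ),
        key=lambda flag: flag.raised_on,
    )
    return [
        f"This failure mode was identified in {flag.ref} on "
        f"{flag.raised_on:%B} {flag.raised_on.day} and was not addressed before ship."
        for flag in matches
    ]


# Hypothetical usage
flags = [
    Flag("PR #123", date(2025, 3, 3), "race-condition", addressed=False),
    Flag("PR #124", date(2025, 3, 4), "race-condition", addressed=True),
    Flag("PR #125", date(2025, 3, 5), "auth-bypass", addressed=False),
]
for line in calibration_notes(Incident(date(2025, 4, 1), "race-condition"), flags):
    print(line)
```

Matching on a tag rather than free text is the design choice that makes this work; without an agreed vocabulary, the matching has to be done by hand during the postmortem.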

Put a senior on every AI-native system’s design review, not just the code review. Code review is too late. By then the architecture is set and the only conversation left is stylistic. Design review is where senior judgment actually prevents expensive mistakes. Move your seniors upstream.

Run quarterly “prevented incident” retros. Once a quarter, the senior engineers present what they caught and the counterfactual. What would have happened if this had shipped? What did it cost to catch it? That reframes senior time as prevention, not overhead.
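A sketch of the aggregation behind such a retro, assuming each caught issue records the senior time spent catching it and a counterfactual estimate of what shipping it would have cost. Those estimates are judgment calls the reviewers supply, not measurements, and `CaughtIssue`, `quarter_of`, and `quarterly_retro` are hypothetical names.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class CaughtIssue:
    caught_on: date
    summary: str
    hours_to_catch: float        # senior time spent in design or code review
    est_hours_if_shipped: float  # counterfactual estimate: rework plus incident response


def quarter_of(d: date) -> str:
    """Calendar quarter label, e.g. date(2025, 5, 1) -> '2025-Q2'."""
    return f"{d.year}-Q{(d.month - 1) // 3 + 1}"


def quarterly_retro(issues: list[CaughtIssue]) -> dict[str, dict[str, float]]:
    """Per-quarter totals: issues caught, hours spent catching them, estimated hours avoided."""
    totals: dict[str, dict[str, float]] = defaultdict(
        lambda: {"caught": 0, "hours_spent": 0.0, "est_hours_avoided": 0.0}
    )
    for issue in issues:
        bucket = totals[quarter_of(issue.caught_on)]
        bucket["caught"] += 1
        bucket["hours_spent"] += issue.hours_to_catch
        bucket["est_hours_avoided"] += issue.est_hours_if_shipped
    return dict(totals)


# Hypothetical usage
issues = [
    CaughtIssue(date(2025, 1, 15), "unbounded retry loop", 2.0, 40.0),
    CaughtIssue(date(2025, 2, 1), "client-side-only auth check", 1.0, 10.0),
    CaughtIssue(date(2025, 4, 2), "missing input sanitization", 3.0, 16.0),
]
for quarter, stats in sorted(quarterly_retro(issues).items()):
    print(quarter, stats)
```

Putting `hours_spent` next to `est_hours_avoided` is the reframe in numeric form: senior review time shown against the cost it plausibly prevented, rather than as overhead on its own.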

The bigger reframe

The vibe coding debate is a symptom. The underlying issue is that engineering organizations built their scorecards for a world where code production was the bottleneck. In that world, throughput meant progress.

That world ended sometime around late 2024. The bottleneck isn’t production anymore. It’s ownership. Review capacity. System understanding. Architectural coherence across the full surface area. Governance. Incident response.

If your scorecard only measures production throughput, you will systematically underfund the ownership layer. The senior engineers trying to protect that layer will keep losing quarterly reviews while the on-call pager gets louder.

The seniors aren’t wrong. The scorecard is.

What senior engineers should do right now

Three moves, in order.

Stop arguing about vibe coding. The debate is a distraction. Every hour spent defending “slow careful engineering” in principle is an hour not spent proving prevented cost in practice.

Start a prevented-incident log today. One line per blocked PR, rejected design, or caught architectural issue. Share it monthly with your manager, not as a complaint but as data. Six months from now you’ll have a case you can actually make.

Volunteer for the AI incident response playbook. When the next AI agent deletes something important (and it will), be the person with the playbook. Incidents shift organizational gravity. You want to be the person organizations call, not the person who said “I told you so.”

The seniors who survive this era will not be the ones who pushed back the loudest. They’ll be the ones who learned to make their judgment measurable, visible, and impossible to dismiss when the throughput chart is on screen.

The vibe coders are going to keep shipping. That’s fine. The question is who’s going to own what they ship in production three months later. That’s the open job. If you’re a senior engineer, that’s your job. Go take it.

What prevented-incident data do you actually have from the last quarter? Find me on X, Telegram, or LinkedIn.

Disclaimer: This article references specific studies, surveys, and public commentary for illustrative and educational purposes, including work from Forbes, Stack Overflow, DX, METR, Medium authors, Replit and Lovable incident reports, and industry analyses available at the time of writing. I have not independently verified all claims. The analysis and opinions expressed are my own. I have no financial interest, business relationship, or affiliation with any companies or tools mentioned. This is commentary, not investment, legal, career, or business advice.