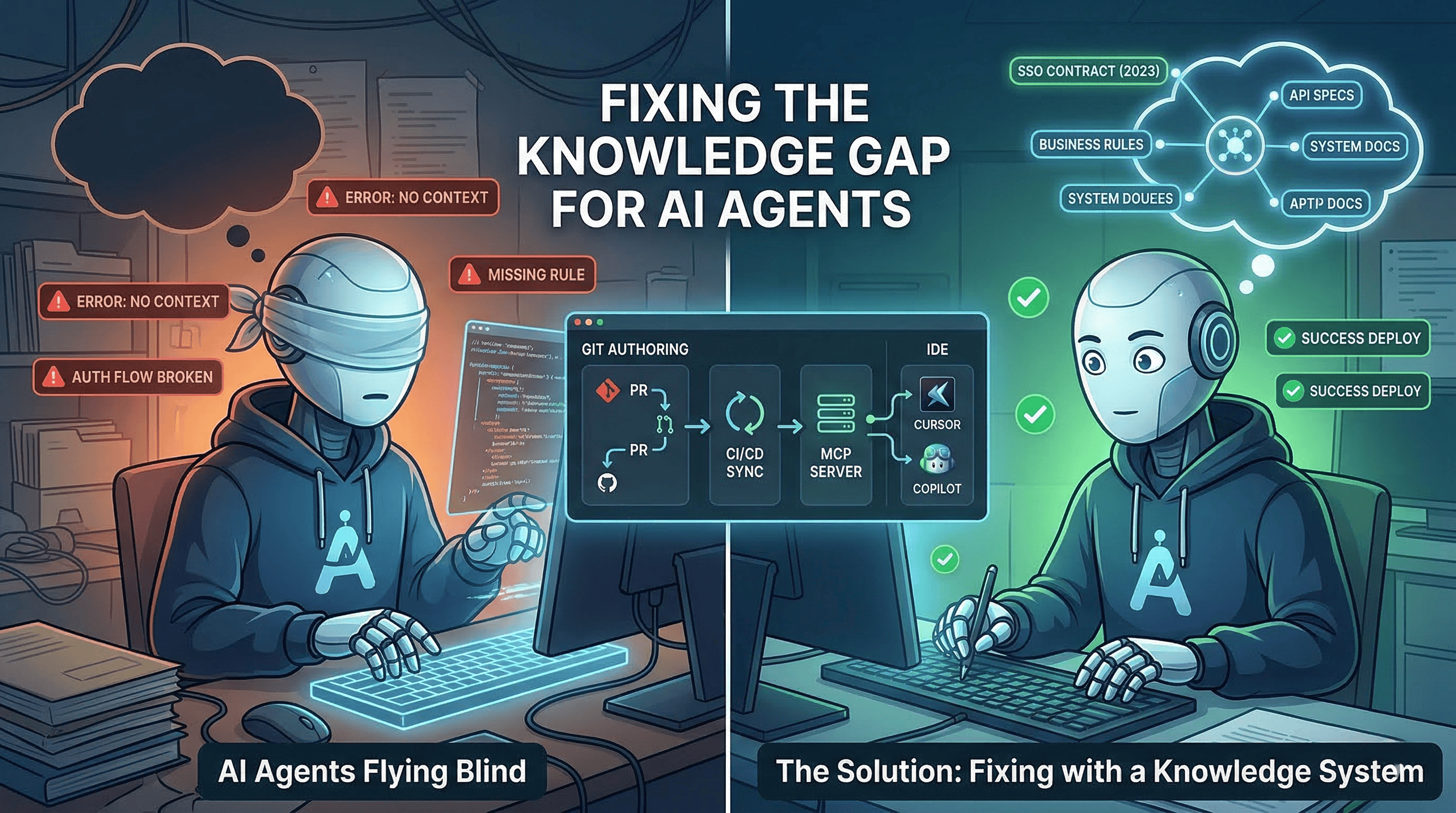

Your AI agent just rewrote the authentication flow. The code is clean. Tests pass. The PR looks great.

One problem: it broke the SSO integration with three enterprise customers because it didn’t know the auth service has a contract with the identity provider that requires a specific token format. That contract lives in a Slack thread from 2023 and one engineer’s head.

The agent didn’t make a mistake. It made a perfectly reasonable decision with the information it had. The information it had was almost nothing.

This is happening across your codebase right now. Not just with authentication. With everything. Business rules, API contracts, deployment constraints, database conventions, service boundaries. Your agents write code that compiles, passes tests, and violates assumptions that live nowhere except in people’s heads and scattered documents nobody maintains.

I’ve written about why context is the fundamental problem in AI. I’ve written about putting AI agents on the org chart and managing them like team members. But none of that matters if the agents start every session blind.

If you’re running agents in production, this is the problem you need to solve next.

Two teams, same agents, wildly different results#

Let me describe what I’m seeing.

Team A has agents embedded in their development workflow. An agent picks up a ticket to add a new validation rule to the user registration flow. Before writing a line of code, it queries a knowledge base and gets back: the existing validation rules, the reason the email format check is stricter than RFC 5322 (because of a legacy migration), the API contract with the notification service, and the team’s convention for error handling. The agent writes code that fits. The PR gets approved on the first review.

Team B has the exact same agents, same models, same IDE. Their agent picks up a similar ticket. It reads the code in the repo, sees patterns, generates a solution. The solution uses a different error handling pattern than the rest of the codebase. It changes the validation response format, which breaks the mobile client. It adds a database column without following the team’s migration conventions. The PR gets three rounds of review comments and a refactor.

Same AI. Same capability. Completely different outcomes.

The difference isn’t the model. It’s that Team A solved the knowledge problem and Team B didn’t.

Where knowledge actually lives (and why that’s broken)#

In most engineering organizations, critical knowledge is scattered across:

- People’s heads. The worst possible storage medium.

- Slack threads. Searchable in theory, buried in practice.

- Confluence pages. Written once, updated never.

- Code comments. Spotty at best, misleading at worst.

- Tribal knowledge. “Ask Daniel, he built that service.”

None of this is accessible to AI agents. None of it is structured for retrieval. None of it stays current.

And here’s the compounding problem: as AI agents do more work, the knowledge gap matters more, not less. When humans wrote all the code, at least the person writing it carried the context. When agents write the code, the context has to come from somewhere else. Or it doesn’t come at all.

Think about it this way: a senior developer who’s been on your team for three years carries hundreds of micro-decisions in their head. Why the payment service retries exactly three times. Why the user permissions check happens at the API gateway, not the service layer. Why that database query uses a specific index hint. Now imagine replacing that developer with an agent that knows none of this. That’s what you’re doing every time an agent starts a session.

The wrong way to fix this#

The instinct is to throw more code at the agent. Bigger context windows. More files in the prompt. RAG over the entire codebase.

I’ve seen teams try this. Here’s what happens:

They dump the entire repo into the context. The agent drowns in irrelevant code and can’t find the signal, and every token costs money, so you’re paying premium rates to confuse your own agents. They build RAG over Confluence. The retrieval returns pages from 2021 that contradict how things actually work. They write massive README files. Nobody maintains them. Within three months they’re more misleading than helpful.

And the costs compound. More tokens in the context means higher API bills on every single request. Bad context leads to wrong code, which leads to longer review cycles, which leads to rework, which means more agent sessions with the same bad context. It’s compound interest working against you. Every layer of waste multiplies the next.

The problem isn’t volume of information. It’s the right information, maintained, structured, and delivered at the moment the agent needs it. Get this wrong and you’re not just getting bad code. You’re paying more for it with every iteration.

What actually works: a developer knowledge hub#

After months of thinking about this problem and looking at how every available solution falls short, I believe the answer is a system with three components that work together.

Developers author markdown: product rules, system docs, architecture specs, skills

Chunk, embed, index → semantic search

Generated context + reusable workflows

One server → every IDE & agent can query knowledge

Git for authoring#

Not Confluence. Not Notion. Not some SaaS product with its own editing UI.

A Git repository. Markdown files. Pull requests for review. CI/CD for automation. The same workflow developers already use for code.

Why Git? Because the adoption problem kills every knowledge initiative that requires developers to learn a different tool. PRs already have review workflows. Blame shows who wrote what. History shows when things changed. CODEOWNERS controls who can approve what. Your developers already know all of this. Zero adoption friction.

The repo holds four types of knowledge:

Product knowledge. Business rules, domain logic, edge cases, validation requirements. Why the user registration flow requires that specific email format. Why the discount calculation has a different rounding rule for enterprise customers. This changes every sprint.

System knowledge. Build commands, repo structure, coding conventions, database patterns, module boundaries. Why you always run migrations before the test suite. Why the cache invalidation uses event sourcing instead of TTL. This changes when code changes.

Architecture knowledge. API contracts, data flows, service boundaries, system invariants. Why the payment service is the only service allowed to write to the transactions table. Why the notification queue has exactly-once delivery semantics. This changes rarely but matters enormously.

Operational skills. Code review checklists, debugging guides, feature scaffolding patterns, cross-repo change workflows. How to add a new API endpoint. How to set up a feature flag. How to run a database migration across services. How the CI/CD pipeline works, which checks run on PR, which run on merge, what gates production. How linting and formatting are enforced and what to do when a check fails. How to roll back a deployment. How to triage a failing build. These are reusable agent workflows that encode how your team actually works. Not just the code, but the entire delivery process around it.

Semantic search for retrieval#

Raw markdown is great for humans. Useless for agents that need to find the right three paragraphs out of thousands for a specific task.

This layer chunks the markdown by section, embeds it into vectors, and indexes it for semantic retrieval. When an agent asks “what are the validation rules for the registration flow?” it gets the relevant sections, with citations back to the source documents.

AWS Bedrock Knowledge Bases does this out of the box. So does Pinecone, Weaviate, or any vector store with a decent chunking strategy. The specific tool doesn’t matter. What matters is that knowledge becomes semantically searchable, not just keyword-matchable.

CI/CD syncs markdown to the search index on every merge. Knowledge stays current automatically. No manual re-indexing. No stale embeddings.

MCP for delivery#

Here’s where it comes together.

Your developers use Cursor, Claude Code, Copilot, Codex, Kiro. Probably several of them. Each one is an island. Each one starts every session without context.

Model Context Protocol (MCP) is the open standard that connects all of them. I wrote a deep dive on MCP earlier. If you haven’t read it, start there.

One MCP server wraps your knowledge base and exposes it to every IDE and agent through a standard interface. Build one server. Every tool connects natively. New tools that support MCP work automatically. Zero per-tool maintenance.

The server exposes three tools: search_knowledge for semantic search across all knowledge, get_document to fetch a specific doc by path, and list_knowledge_bases to discover available sources. Simple interface, massive impact.

Without MCP: You build a separate integration for each IDE. Maintain six connectors. Each tool gets knowledge differently. Every new tool means new work.

With MCP: You build one server. Everything connects. When the next AI coding tool launches next month, it just works.

The loop that makes it compound#

Here’s where this gets really powerful. The system doesn’t just serve knowledge. It grows.

via MCP

full context

knowledge repo

CI re-indexes

starts smarter

The workflow in detail:

- Agent reads. Before starting work, queries the knowledge base via MCP. Gets business rules, conventions, architecture constraints relevant to the task.

- Agent works. Develops with full context. The code actually follows the patterns and rules.

- Agent writes back. A built-in skill instructs the agent to capture what it learned during development and open a PR to the knowledge repo.

- Developer reviews. Standard PR review. Approves or refines the knowledge doc.

- CI syncs. Merged knowledge is automatically indexed. Next agent session starts smarter.

Knowledge capture becomes part of development, not a separate chore. The developer just reviews. No separate authoring step.

Every feature built makes the next feature easier. Every agent session makes the next session smarter. The knowledge compounds.

The AGENTS.md safety net#

Not every agent session has MCP access. Sometimes developers work offline. Sometimes a new tool doesn’t support MCP yet. Sometimes the knowledge server is down.

For these cases, CI generates a lightweight AGENTS.md in each repo. It’s a table of contents for the agent: what this repo does, how to build and test it, architecture boundaries, conventions and constraints, and where to find the full knowledge base.

Think of it as the offline fallback. Agents get essential context even without network access. Push model (always in-repo) complementing the pull model (on-demand via MCP).

Why nothing on the market solves this#

I looked at many solutions out there. Each solves a piece, and the approach I’m describing borrows the best parts from all of them.

Meta-repos (centralized Git docs). Git-native authoring, but no semantic search. Agents can’t find what they need.

Wiki + RAG (Confluence/Notion with retrieval). Searchable, but not Git-native. Developers won’t update it. Knowledge rots within months.

Code wikis (auto-generated from code). Clever, but usually tied to one AI tool. Not universal.

Cloud RAG services (Bedrock KB, Vertex). Managed search, but no authoring story. Where does the content come from?

Agent memory (Copilot memory, Letta). Per-tool, per-session. Not centralized. Not shared across the team.

You need all five capabilities in one system. That’s what this approach delivers.

How to start (without boiling the ocean)#

Day 1: Create the knowledge repo. Sit with your two or three most senior engineers, the ones who carry the most context in their heads. Ask them: “What do you find yourself explaining over and over?” That’s your first knowledge document.

Day 2-3: Set up semantic search. Connect your markdown to a vector store. Get retrieval working. This is not a multi-week project. The tooling exists. Use it.

Day 4-5: Deploy the MCP server. Configure it in your team’s primary IDE. Have a developer pair with an agent on a real task and compare the output to what they’d get without the knowledge base. That’s your first signal.

Week 2: Add the write-back loop. Build the skill that instructs agents to capture knowledge after completing work. Train your developers on how to review knowledge PRs, not just code PRs. This is where it starts compounding.

The technology side of this is days of work. The harder part is getting your team to treat knowledge as a first-class deliverable, not an afterthought. That’s a leadership problem, not a tooling problem. But once developers see their agents producing better code because someone took 20 minutes to document business rules, the culture shift happens on its own.

We’re in the AI era. If the infrastructure takes you months, you’re overengineering it. Get something working in days, iterate from there. The humans will make it great.

The key insight: start with the knowledge that hurts most when it’s missing. That’s usually the domain logic, the business rules that experienced developers carry in their heads and that agents get wrong in ways that look correct until they hit production.

The uncomfortable question#

If your AI agents are generating code without context, how much of that code is actually correct?

Not “does it compile” correct. Not “does it pass the tests you wrote” correct. Actually correct. Follows the business rules, respects the architecture, uses the conventions, handles the edge cases that burned you last quarter.

If you can’t answer that confidently, your agents aren’t helping as much as you think. They’re generating plausible-looking code that somebody has to review against all the unwritten knowledge that exists only in people’s heads. And you’re paying for every token of that wrong output, then paying again for the review, again for the rework, and again when the agent generates the same mistake tomorrow because nothing changed.

That’s not an AI problem. That’s a knowledge management problem. And it’s solvable.

The organizations that figure this out first will have AI agents that don’t just write code. They write the right code. Every time. From session one.

That’s the difference between AI as a novelty and AI as a genuine multiplier. And it’s what separates teams that are actually shipping with agents from teams that are just generating code and hoping for the best.

Building knowledge systems for AI agents? Thinking about MCP? I’d love to hear how you’re approaching it. Find me on X or Telegram.