I wrote last year that the developer’s work didn’t change; the sequence did. AI moved context gathering and scaffolding earlier. You opened your laptop to a draft instead of a blank file.

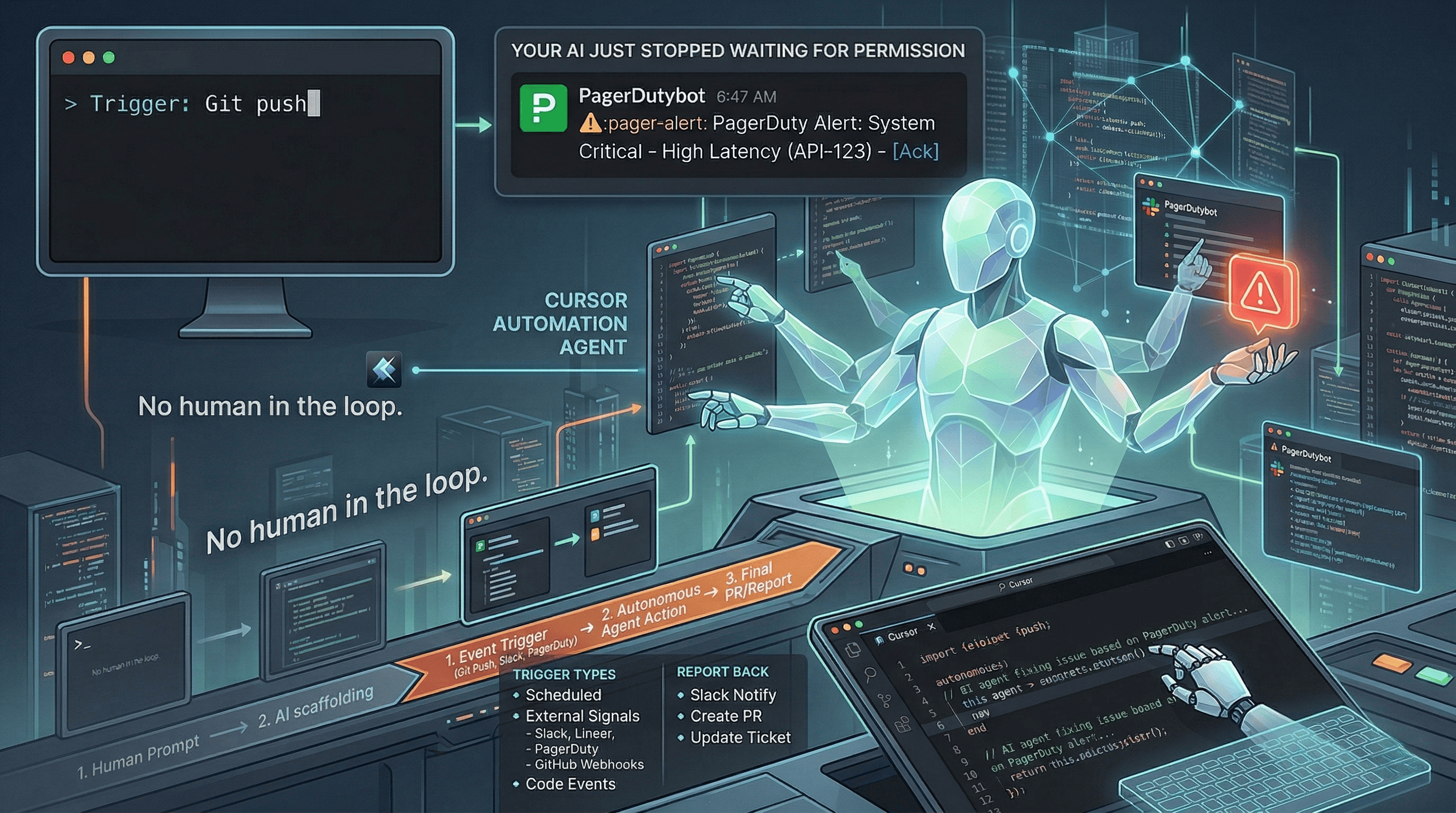

On March 5, Cursor moved the sequence again. Automations lets AI agents trigger without you prompting them. A Slack message, a Git push, a PagerDuty alert, a cron timer. The agent spins up a cloud sandbox, follows instructions you’ve defined, uses your configured MCPs and models, and reports back via PR, Slack, or ticket.

No human in the prompt loop. That’s a different category of tool.

What it actually does

Three trigger types: scheduled timers (hourly, nightly, weekly), external signals (Slack, Linear, PagerDuty, GitHub webhooks), and code events (new PRs, branch pushes, test failures).
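To make the three categories concrete, here is a minimal Python sketch of how an incoming event might be sorted into them. It is not Cursor's API or configuration format: `TriggerKind`, `classify_trigger`, and the source names are all hypothetical, chosen only to mirror the list above.

```python
from enum import Enum


class TriggerKind(Enum):
    SCHEDULED = "scheduled"        # hourly, nightly, weekly timers
    EXTERNAL_SIGNAL = "external"   # Slack, Linear, PagerDuty, GitHub webhooks
    CODE_EVENT = "code"            # new PRs, branch pushes, test failures


# Hypothetical source names; a real integration would define its own.
_SOURCES = {
    TriggerKind.SCHEDULED: {"hourly", "nightly", "weekly"},
    TriggerKind.EXTERNAL_SIGNAL: {"slack", "linear", "pagerduty", "github_webhook"},
    TriggerKind.CODE_EVENT: {"pull_request_opened", "branch_push", "test_failure"},
}


def classify_trigger(source: str) -> TriggerKind:
    """Return the trigger category for a source name, or raise ValueError."""
    key = source.strip().lower()
    for kind, names in _SOURCES.items():
        if key in names:
            return kind
    raise ValueError(f"unknown trigger source: {source!r}")
```

Under these assumptions, `classify_trigger("PagerDuty")` returns `TriggerKind.EXTERNAL_SIGNAL`, and an unrecognized source raises rather than silently starting an agent.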

Cursor is already using this internally. Security reviews on every code push. Risk classification that auto-approves low-risk PRs. Incident response kicked off by PagerDuty alerts. Weekly repo change summaries. Bug report triage. Test coverage identification.
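Cursor hasn't published how its risk classifier works, so the following is only a sketch of the general idea: a conservative rule set where anything uncertain falls through to a human. The thresholds, path prefixes, and the `PullRequest`, `classify_risk`, and `decide` names are assumptions made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical thresholds and path prefixes, for illustration only.
SENSITIVE_PREFIXES = ("auth/", "billing/", "infra/", ".github/workflows/")
MAX_LOW_RISK_LINES = 50


@dataclass
class PullRequest:
    changed_files: list[str]
    lines_changed: int
    tests_passed: bool


def classify_risk(pr: PullRequest) -> str:
    """Return "low" or "high" using simple, conservative rules."""
    if not pr.tests_passed:
        return "high"
    # str.startswith accepts a tuple of prefixes.
    if any(f.startswith(SENSITIVE_PREFIXES) for f in pr.changed_files):
        return "high"
    if pr.lines_changed > MAX_LOW_RISK_LINES:
        return "high"
    return "low"


def decide(pr: PullRequest) -> str:
    """Auto-approve only low-risk PRs; everything else goes to a human."""
    return "auto-approve" if classify_risk(pr) == "low" else "needs-human-review"
```

With these rules, a small docs-only change with passing tests is auto-approved, while a three-line change under `auth/` still goes to a reviewer. The design choice worth copying is the default: the function has to prove a PR is low risk, not the other way around.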

The agents also have a memory tool that lets them learn from past runs. So the security review agent that ran on Monday remembers context when it runs on Friday.
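How Cursor implements that memory isn't documented in anything I've cited here. As a rough mental model only, persistence between runs can be as simple as notes written to disk by one run and read back by the next. `RunMemory` and its methods below are hypothetical names, not part of any Cursor interface.

```python
import json
from pathlib import Path


class RunMemory:
    """Tiny JSON-backed note store that persists between agent runs."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load earlier notes if a previous run left any behind.
        self.notes: dict[str, list[str]] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    def remember(self, topic: str, note: str) -> None:
        """Append a note under a topic and write the store back to disk."""
        self.notes.setdefault(topic, []).append(note)
        self.path.write_text(json.dumps(self.notes, indent=2))

    def recall(self, topic: str) -> list[str]:
        """Return a copy of the notes saved for a topic (empty if none)."""
        return list(self.notes.get(topic, []))
```

In this sketch, Monday's run would call `remember("security-review", ...)`, and Friday's run would construct a fresh `RunMemory` on the same path and call `recall("security-review")` to get those notes back.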

This isn’t an assistant waiting for your question. It’s a coworker that works a different shift.

How the others compare

GitHub Copilot’s coding agent is the closest competitor. It already handles tasks end-to-end: assign an issue, the agent works autonomously, opens a PR. As of March 2026, agent hooks are in public preview, letting you run custom commands at key points during agent sessions. It also reviews its own changes before opening PRs and runs security scanning automatically. The big advantage is distribution: it lives where most teams already work (GitHub, VS Code, JetBrains). The limitation is that event triggers are still more constrained than Cursor’s broad webhook and Slack integration.

Claude Code is Anthropic’s terminal-based agent. It manages files, Git, shell commands, and tests independently of any IDE. Powerful for deep, autonomous coding sessions. But it doesn’t have event-driven triggers yet. You start it, it works, it finishes. There’s no “trigger Claude Code when a PagerDuty alert fires.” That gap will likely close, but right now it’s a different paradigm: on-demand autonomy versus always-on automation.

JetBrains Air launched the same month as an agentic development environment. It orchestrates multiple agents (Codex, Claude, Gemini, Junie) running in parallel in isolated containers. It’s the closest thing to “mission control for agents.” But it’s focused on delegating tasks and monitoring progress, not on event-driven automation. You still tell Air what to do. Cursor Automations lets the system tell the agent what to do.

Amazon Q doesn’t have event-driven features yet, but analysts expect an announcement soon. Given AWS’s strength in event-driven architecture (Lambda, EventBridge, Step Functions), their version could be interesting when it arrives.

Why this matters

The shift from “I prompt the AI” to “the system triggers the AI” changes the organizational model for engineering teams. Security reviews can happen on every push without a human bottleneck. Triage can happen before anyone looks at their morning tickets. Maintenance tasks can run on a schedule nobody has to remember.

But it also means more code being generated and committed with less human involvement per change. If your team is already struggling to understand what shipped (and the data suggests many are), autonomous agents running on triggers will widen that gap.

The teams that will get the most out of this are the ones with strong guardrails already in place: good CI, real tests, meaningful review standards, and engineers who understand the systems well enough to evaluate what the agent produced. The teams that will get burned are the ones hoping automation replaces the discipline they never built.

Cursor crossed $2 billion in annual revenue in about 18 months, roughly 20x faster than GitHub Copilot’s climb to $100 million ARR. That’s not just hype. Engineers are voting with their wallets. Automations is the bet that the next step isn’t a better copilot. It’s an always-on agent layer that treats your codebase as a continuously monitored system.

The sequence changed again. The question is whether your engineering practices changed with it.

Using Cursor Automations or building event-driven agent workflows? I’d love to hear what triggers you’re running. Find me on X or Telegram.